Ethical AI for Startup Marketing: Minimal Viable OS Guide

Discover how startups can ethically implement AI in marketing. Learn to use a minimal viable OS, build trust, and avoid common pitfalls.

The key to ethically using AI in startup marketing is to begin with a minimal viable operating system (OS).

The same rules of ethical marketing apply to AI in a startup, but that doesn’t mean ethical AI in a startup is just “ethics for marketing” with “AI” taped on. Startups are fast. Startups launch campaigns with a tiny team. Startups have to make hard choices with data that is messy and was never intended for machine learning models. You almost certainly do not have a legal team that can vet every claim, every audience segment, and every data transfer. Add pressure to show growth to investors and you have a perfect storm for unintentional harm, brand-undermining mistakes, and growth-choking mistrust, even with the best of intentions.

In a startup I would describe ethics as a growth hack: safeguard your users, safeguard your brand, and safeguard your growth by minimizing unnecessary risk. This means you don’t approach ethics as a regulatory cost you’ll handle down the road. You approach it like quality assurance for your marketing engine. When you leverage AI to write, target, personalize, qualify leads, or summarize customer data you’re making ethical choices that will either multiply your trust or erode it silently. Ethics is the growth path where you won’t find yourself blown up by landmines you could have recognized. If you’re also thinking about repeatability and process, the social media content calendar can help connect ethics guardrails to a publishing cadence.

In this piece, you’ll get a startup-ready operating model you can run with a small team, including lightweight guardrails and review processes that don’t squash velocity.

I’ll show you how to communicate AI in a clear way, and avoid AI-washing while still coming across ambitious and competitive.

And I’ll walk you through channel-by-channel risk decisions, to help you decide where to go all out on automation, where to keep humans in the loop, and where the risks just aren’t worth the potential gains. If you want a broader context on cadence and tooling tradeoffs, see smart social media automation.

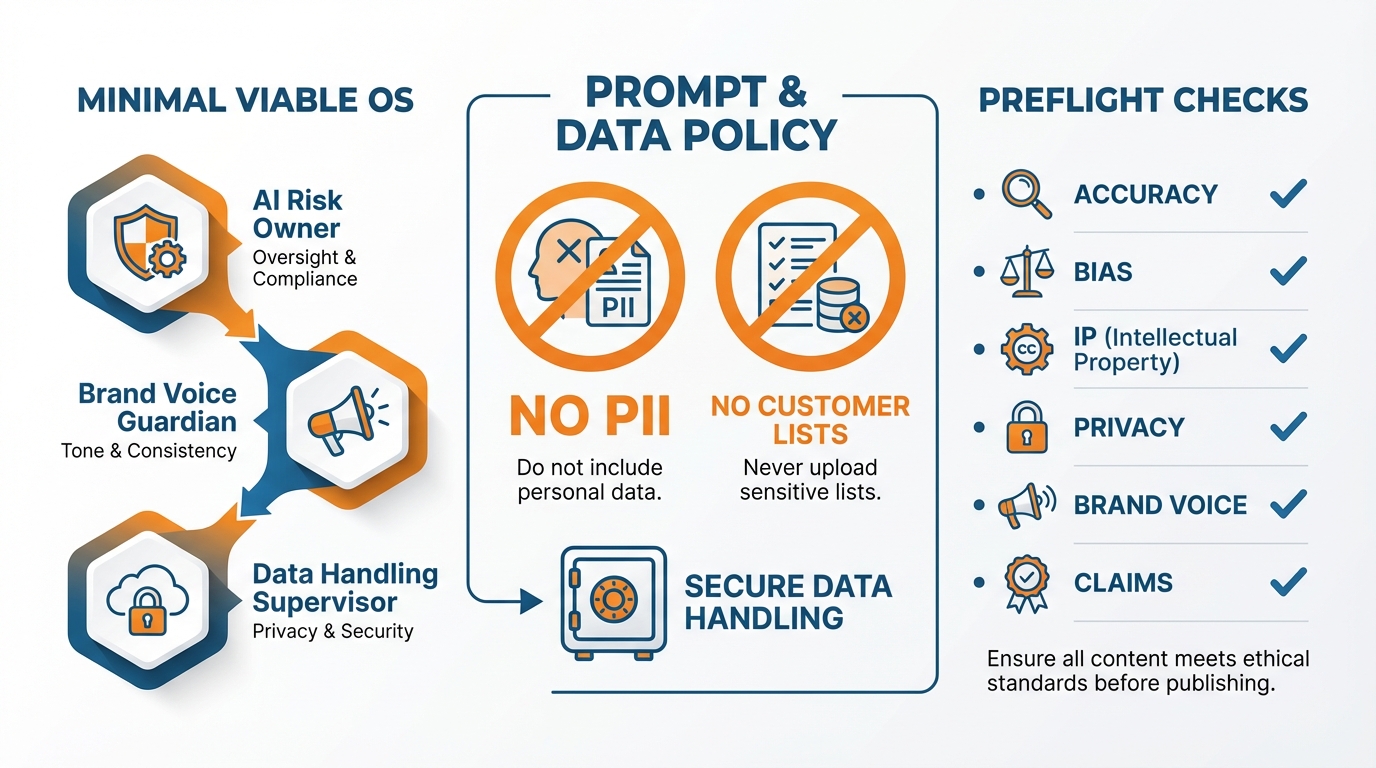

A minimal viable operating system (OS)

One thing we do at Airtable is give AI use in marketing an operating system from the very beginning, even on a team of just 2-5 people: there is one named owner of AI risk, one named owner of brand voice, and one named owner of data handling.

There is a clear rule that dictates who is allowed to press publish, and clearly defined parameters for what’s OK, what’s off limits, and what always requires a human review.

In general, a human should review anything that includes customer proof, numbers, product performance, comparisons, regulated areas, or any claim that could impact a buying decision.

I approach this like shipping discipline: speed is achieved through clarity, not circumvention of review.

The way to avoid shadow-AI is to keep an inventory that is cheaper to maintain than the disaster that it prevents.

You should list all your AI-enabled marketing interactions: tools, prompts, data sources, which automations push content out to users or personalize it, and whose servers hold your input.

It should be an active document with author and date fields that get updated at least weekly as your tech stack changes.

I also categorize each AI application by risk: low risk (idea generation, drafts), medium risk (customer-facing text and graphics), and high risk (runs off customer data, targeting, makes performance claims, looks like it could be a customer testimonial).

This categorization helps make responsible use of AI in startup marketing a matter of metrics rather than intuition.
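As a rough illustration, the inventory and its risk tiers could live in a small script rather than a spreadsheet. This is a minimal sketch, not a prescribed schema: the `AIUseCase` fields, the `Risk` enum, and the seven-day staleness window are all assumptions chosen to mirror the categories and weekly update cadence described above.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Risk(Enum):
    LOW = "low"        # idea generation, drafts
    MEDIUM = "medium"  # customer-facing text and graphics
    HIGH = "high"      # customer data, targeting, performance claims, testimonial-like content


@dataclass
class AIUseCase:
    """One row of the AI inventory (field names are illustrative)."""
    tool: str
    purpose: str
    data_sources: list[str]
    customer_facing: bool
    uses_customer_data: bool
    makes_performance_claims: bool
    owner: str
    last_reviewed: date

    def risk(self) -> Risk:
        # High-risk signals win over everything else.
        if self.uses_customer_data or self.makes_performance_claims:
            return Risk.HIGH
        if self.customer_facing:
            return Risk.MEDIUM
        return Risk.LOW

    def is_stale(self, today: date, max_age_days: int = 7) -> bool:
        # Flags entries that have not been reviewed within the chosen window.
        return (today - self.last_reviewed).days > max_age_days


draft_helper = AIUseCase(
    tool="example-llm",  # hypothetical tool name
    purpose="blog outline drafts",
    data_sources=["public docs"],
    customer_facing=False,
    uses_customer_data=False,
    makes_performance_claims=False,
    owner="marketing lead",
    last_reviewed=date(2026, 1, 1),
)
print(draft_helper.risk().value)
```

Deriving the tier from a few yes/no fields, rather than typing it in by hand, keeps the categorization consistent when several people update the inventory.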

Prompt and data policy

The fastest win is a policy on what data and text may go into a prompt, because the default temptation is to shove all the context into a prompt and hope the problem goes away.

Don’t put PII into a prompt. Don’t dump your customer list into a prompt. Don’t tell it anything about a person’s health status, race, religion, political views, or any other sensitive trait you could be accused of inferring or misusing.

Don’t dump internal documents, unreleased product information, your private pricing, your investor presentation, your security information, or your support transcripts into a prompt unless you have a good reason to and permission to do so.

If you need to have a model work with your actual customer’s text, sanitise it first: remove names, remove email addresses, remove phone numbers, remove any mention of the customer’s company, and remove any detail from the story that could help re-identify that customer; then retain the original information in your own system and only share the smallest section of it with the model that you need to accomplish your task.

I always assume that a prompt could be forwarded to someone else at some point and I act accordingly.
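The sanitizing step can be partly scripted. The sketch below is a starting point under stated assumptions, not a complete PII scrubber: the two regular expressions are deliberately simple, the `redact` helper and its `known_names` argument are hypothetical, and story details that could re-identify a customer still need a human read.

```python
import re

# Simple patterns; they will miss unusual formats and may over-match long digit runs.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str, known_names: list[str]) -> str:
    """Replace emails, phone numbers, and known customer/company names with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    for name in known_names:
        # Names come from your own records (e.g. CRM), not from guessing.
        text = re.sub(re.escape(name), "[REDACTED]", text, flags=re.IGNORECASE)
    return text


raw = "Contact Jane Doe at jane@acme.io or +1 (555) 123-4567 about Acme."
print(redact(raw, ["Jane Doe", "Acme"]))
```

The original text stays in your own system; only the redacted version, trimmed to the part the task needs, goes to the model.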

Preflight checks (minutes, not days)

Before publishing, you do a preflight check that takes minutes, not days: accuracy, bias & representation, IP & attribution, privacy, brand voice, and claims.

Accuracy: you fact-check every fact-like claim, particularly numbers, integrations, pricing, time savings, and customer results.

If you can’t verify it quickly, you rephrase as an opinion or simply omit.

Bias: you check who is present and absent in examples and images.

You don’t stereotype roles, industries, or geographies.

IP & attribution: you don’t allow AI to fabricate sources and you don’t use AI-generated images that evoke a particular style or person.

If you mention a study or partner, you confirm with the original source.

Privacy: you double-check that no customer names or IDs crept in.

Claims: you don’t use absolute language unless you have proof.
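Part of the claims check can be automated as a first pass. The following is a small sketch that flags absolute wording for a human to confirm or soften; the `ABSOLUTE_TERMS` list and the `flag_absolute_claims` name are assumptions, and a match only means "look at this", not "this is wrong".

```python
import re

# Illustrative list; extend it with the absolutes your own copy tends to use.
ABSOLUTE_TERMS = ["always", "never", "guaranteed", "100%", "best", "every", "zero risk"]


def flag_absolute_claims(copy: str) -> list[str]:
    """Return the absolute terms found in the copy, in list order."""
    lowered = copy.lower()
    found = []
    for term in ABSOLUTE_TERMS:
        # Match the term only when it is not embedded inside a longer word.
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        if re.search(pattern, lowered):
            found.append(term)
    return found


print(flag_absolute_claims("Guaranteed results, 100% accurate, every time."))
```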

If there’s a mistake, you respond as you would to a product outage.

You remove the asset, document what happened, alert everyone who will get questions about it, and prevent recurrence, typically by tightening the inventory, adding a required review trigger, or changing what knowledge is available in prompts.

What ethical AI marketing for startups looks like

There’s a second part to ethical AI for startup marketing that nobody talks about: just as you must be ethical in how you use AI, you must be ethical in how you market AI.

To market AI ethically you need to do one simple thing: not lie about AI. Avoid AI-washing.

You can lose your customer’s trust fast when you don’t put positioning in check and instead let your passion, your competitor’s boasts, and your investor’s fantasies lead you to position your offering as better and bigger than it actually is.

When I’m tempted to exaggerate, I use the following guideline: you can be as aspirational as you want when describing what’s next, but you have to be truthful about what’s here today.

If you follow that guideline, you’ll have more success with sales, because customers notice the difference between positioning that is reliably accurate and positioning that is exaggerated, and it’s exaggerated positioning that causes longer sales cycles, more security audits, and more refunds. Labeling matters here too: in research on consumer skepticism and labeling, a 2024 representative study reported in its Experiment I lower engagement for identical ads when they were labeled as AI-generated, which underscores how disclosure can affect behavior when trust is fragile (see the study on consumer skepticism of AI-labeled ads).

To keep your message fact-based and still sell, you need a proof bar for every performance verb.

Before you can say “improves”, you need a baseline and a measured delta on a specified metric, across more than one customer or dataset, not just a best-case screenshot.

Before you can say “detects”, you need a definition of true positive and false positive, and you need to understand what your model fails to detect and which scenarios do not work as well. In practice that means having a specified evaluation set size, a validation method, and at least one plain-language number like precision or recall, plus a primary failure mode.
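For reference, precision and recall follow directly from the counts in a labeled evaluation set. This is a minimal sketch; the function name and the example counts are made up for illustration and are not results from any real product.

```python
def precision_recall(true_pos: int, false_pos: int, false_neg: int) -> tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    predicted_positive = true_pos + false_pos
    actual_positive = true_pos + false_neg
    # Guard against empty denominators on tiny evaluation sets.
    precision = true_pos / predicted_positive if predicted_positive else 0.0
    recall = true_pos / actual_positive if actual_positive else 0.0
    return precision, recall


# Hypothetical evaluation: 90 correct flags, 10 false alarms, 30 misses.
p, r = precision_recall(true_pos=90, false_pos=10, false_neg=30)
print(f"precision={p:.2f} recall={r:.2f}")
```

In plain language, precision answers "when it flags something, how often is it right?" and recall answers "of the things it should have caught, how many did it catch?".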

Before you can say “reduces risk”, you need a definition of risk: for whom, and by how much. Risk is not a metric unless you attach it to a specific outcome like fewer chargebacks, fraud losses, compliance exceptions, or incidents per month.

Before you can say “automates”, you need to know what is fully automated, what is AI-assisted, what human review is required, and what happens when confidence is low. Any hidden human-in-the-loop is a potential trust breach unless it is described explicitly.

The boundary between what you consider a demo, a prototype, and a roadmap item is often where the AI-washing creeps in.

If you’re going to show something with carefully selected data, in perfect conditions, or with some manual polishing, that’s fine as long as you call it a curation-assisted demo rather than a production-level demo.

If I’m showcasing a nascent feature, I always try to tease apart three things in the text: what’s already live/production, what’s available as a constrained private beta, and what’s still on the roadmap.

I’ve found you can communicate all that without deflating the coolness by connecting back to reality: today the AI generates and suggests, and you validate.

Tomorrow it will take action on a few things, but only in a private beta.

Further down the line, it will be more broadly available once it has achieved certain levels of confidence.

That way you can stay in the AI marketing game without falling into the old smoke-and-mirrors trap of suggesting production-level deployments, full automation, and/or 100% precision.

Disclosures and caveats do not need to kill sales, as long as you put them where the customer is likely to read them and don’t blindside the customer later.

Position capability caveats next to the capability statement, not in a footnote: on the pricing page, on the feature page, and in the product wherever the user depends on the result.

Surface the caveats that affect purchasing decisions early: data requirements, languages, latency, and human review requirements. Word each caveat in specific terms so it reads like an engineering constraint, not a legal freakout.

I also surface what influences the variability, because it preserves trust and cuts churn: performance depends on data quality, industry trends, and edge cases, so you set the expectation that the results will get better as the model absorbs approved feedback.

The objective is straightforward: the customer should never find a capability caveat after they’ve already told their boss this will be hands-off, real-time, and accurate in every edge case. That expectation matches what consumers say they want: a 2024 survey found 76% agree it is important for companies to disclose when their marketing/advertising uses AI (see the ethical marketing disclosure survey results).

Channel-by-channel risk decisions

Whether a given use of AI in marketing is “right” really comes down to a channel-by-channel risk assessment.

For me, the reality of ethically applying AI in startup marketing is less about philosophical decisions and more about balancing the risks and rewards of each marketing channel.

You need a dead-simple risk framework that everyone on your team can apply in a second.

Low risk is for brainstorming and variations that are never served without review, medium risk is for content that customers see but that still has to be reviewed, and high risk is for anything that can affect whom you communicate with, what you communicate, or how human the communication appears.

I keep it fast by linking the risk tolerance to the channel, not the tool:

The same model can be low risk in brainstorming and high risk when it generates facts, citations, or customer proof.

The minimum discipline is to make sure that you don’t hallucinate facts, fabricate references, invent expertise, or mask plagiarism, by having a source for every statement of fact, by prohibiting any reference that you cannot open and check, and by having one human editor who owns the output as if no AI was used.

Paid ads

Paid ads are the place where minor errors are compounded the most, so consider them high risk if AI played a role in targeting, personalization, or audience generation.

We don’t want sensitive-trait inference even when no one is directly asking for it because models and platforms can triangulate with proxies like ZIP codes, interests, and device behavior; that’s where exclusionary outcomes occur without anyone wanting them.

If you’re using lookalikes, keep them broad and check whether your leads are ending up disproportionately in a particular demographic or neighborhood.

Instead of narrow targeting, I opt for strategies that are just as effective:

- Context-based targeting (i.e., keywords, placements, intent).

- Testing different ad copy variants to see which copy users prefer, without limiting who will see the ads.

- Audience targeting settings that never target or exclude protected classes.

One key tool in your ethical toolkit in this scenario is measurement: you don’t just monitor CPA; you also monitor concentration metrics like how quickly delivery collapses into a small segment, because that is often the first warning sign that you have optimized your system into an ethical and reputation trap. If your team is building repeatable workflows, this is a good point to connect to social media calendar automation for how process and pacing affect outcomes.
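One way to put a number on delivery concentration is the share of impressions going to the largest segments, or a Herfindahl-style index over segment shares. This is a sketch under assumptions: the segment keys, the function names, and the choice of these two measures are illustrative, and any alert threshold is something you would set from your own baseline.

```python
def top_segment_share(impressions_by_segment: dict[str, int], top_n: int = 1) -> float:
    """Fraction of all impressions delivered to the top_n largest segments."""
    total = sum(impressions_by_segment.values())
    if total == 0:
        return 0.0
    largest = sorted(impressions_by_segment.values(), reverse=True)[:top_n]
    return sum(largest) / total


def concentration_index(impressions_by_segment: dict[str, int]) -> float:
    """Sum of squared segment shares: 1/n when evenly spread, 1.0 when one segment gets everything."""
    total = sum(impressions_by_segment.values())
    if total == 0:
        return 0.0
    return sum((count / total) ** 2 for count in impressions_by_segment.values())


# Hypothetical weekly delivery by ZIP-code cluster.
this_week = {"cluster_a": 50, "cluster_b": 30, "cluster_c": 20}
print(top_segment_share(this_week), round(concentration_index(this_week), 2))
```

Tracking either number week over week alongside CPA shows whether optimization is narrowing delivery, which is the warning sign described above.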

Outbound

With outbound, a creepy message can kill trust, so decide what is automated and what stays human with privacy, consent, and the potential for manipulation in mind.

AI can assist with summarizing a public company page, creating a neutral first line, or providing subject-line options. Leave anything that implies urgency or familiarity with the recipient to humans.

You also need a strict policy that you never feed private inbox history, scraped personal data, or sensitive inferences to prompts, even if it would improve personalization, because the perceived surveillance cost is higher than the conversion lift for most small businesses.

The rule of thumb: if any person could conceivably read your message and think “wait, how do they know that?”, it’s too personalized.

You scale with integrity by personalizing only around information the prospect has explicitly shared or that is clearly public knowledge, and by writing copy that persuades with relevance, not coercion.

Social and community

Social and community work is medium risk until automation starts impersonating a human; then it becomes high risk overnight.

Sure, you can automate to, say, identify issues, flag questions that need answering, and even draft the answers, but you can’t automate activities that resemble sockpuppetry, astroturfing, or a spammer’s engagement footprint.

Platforms and communities punish that, and the cost of winning back a good reputation is astronomical.

I maintain trust by never pretending automated content is real-world experience, or pretending I tried something I haven't, or faking what a community says.

Creative media

Next, creative media: audio, video, photographs.

These can confer credibility and also invite trouble, so it is important to establish your policies on AI-enabled re-creations of a person’s likeness, and on endorsements delivered by AI imitations of real people.

Don’t fake a customer testimonial. Don’t fabricate a founder quote.

Don’t make a “real person” a spokesperson for your company unless they’re real and they’ve agreed to it.

If you build growth on a house of fraud, it will come crashing down as soon as someone notices.

Make ethics measurable

Principles are great, but to truly make AI in startup marketing ethical, we need parameters that we can measure.

Responsible AI for startup marketing isn’t squishy once you operationalize principles as a set of controls: audit, risk, mitigate, track, refine.

You begin with a system mindset: treat everything generated by AI as a little system and ask what generated it, with what inputs, from what data, and where the output goes.

Assign a risk category and a default control: speed is now achieved through standardization where low risk is ‘fire away,’ medium risk requires one extra review, and high risk means additional screening as well as more restrictive use of tools and content.

I appreciate this method because it makes ethics actionable: you are not discussing morals in a committee, you are decreasing the risk of an easily avoidable crisis.

You monitor your trust metrics as closely as you monitor your conversion metrics, because that’s where you see the warning signs of ethical drift.

Complaint rate, unsubscribe rate, spam flags, ad disapprovals, and drops in deliverability are all signs that the messaging your AI is optimizing is getting too damn creepy or too damn misleading.

On the fairness side, you monitor for bias signals like skewed lead quality or skewed approval rates by geo or by language or by device type or by time of day, because proxy discrimination shows up first as concentration and second as outrage.

I also monitor the error rate in AI copy with a simple weekly spot-check: the percent of outputs that include unverifiable numbers, invented integrations, or fabricated customer proof.

If that percent goes up, it is usually a prompt design problem or a missing source-of-truth problem, and it’s a lot easier to fix than to repair your trust later. It’s worth remembering that disclosure expectations are high: in a survey of 1,100+ U.S. internet users, 86% said AI-generated content should be disclosed (see the consumer AI disclosure expectation study).
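The weekly spot-check reduces to one percentage that you can chart over time. A minimal sketch, assuming each reviewed output is recorded as a small dictionary with three reviewer-set flags; the field names and the function name are hypothetical.

```python
def spot_check_error_rate(reviewed: list[dict]) -> float:
    """Percent of reviewed outputs with at least one of the three tracked problems."""
    if not reviewed:
        return 0.0
    flagged = sum(
        1
        for item in reviewed
        if item["unverifiable_number"]
        or item["invented_integration"]
        or item["fabricated_proof"]
    )
    return 100.0 * flagged / len(reviewed)


# Hypothetical week: four outputs reviewed, one contains an unverifiable number.
week = [
    {"unverifiable_number": True, "invented_integration": False, "fabricated_proof": False},
    {"unverifiable_number": False, "invented_integration": False, "fabricated_proof": False},
    {"unverifiable_number": False, "invented_integration": False, "fabricated_proof": False},
    {"unverifiable_number": False, "invented_integration": False, "fabricated_proof": False},
]
print(spot_check_error_rate(week))
```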

The other aspect of responsible AI use is to treat vendors how you treat your payment processor.

Ask the dull questions early; it will save you grief later.

Ask about the data retention policy, ask whether the vendor uses your data for training by default, and see if there is a security story you can tell in one paragraph to a risk-averse customer.

Provenance matters even when you are small.

You need to be able to export logs of prompts, outputs, and user actions so you can reconstruct events when an ad gets rejected, an email gets flagged, or a partner challenges a claim.

If a tool can’t answer what happens to the data, how long it’s retained, and how to get access to a record, you aren’t buying AI, you’re buying unknown risk.

Human-in-the-loop fails unless you also define when human-in-the-loop is required and when sampling suffices.

Human-in-the-loop is required on performance claims, comparisons, legal/regulated, customer stories, targeting, personalization based on user data, and anything that can influence a purchase decision.

Sampling can suffice for very large volume, low risk content, provided you teach reviewers how to identify common failure modes quickly: very confident language with weak proof, subtly biased examples, off-putting levels of personalization, non-existent source links, etc.
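The split between mandatory review and sampling can be written down as a routing rule. The sketch below assumes each content item carries a set of tags; the tag names, the `ALWAYS_REVIEW` set, and the sample rate are illustrative choices mapped from the categories listed above, not a standard.

```python
import random
from typing import Optional

# Tags that always require a human reviewer (names are illustrative).
ALWAYS_REVIEW = {
    "performance_claim", "comparison", "regulated", "customer_story",
    "targeting", "personalization", "purchase_influence",
}


def needs_human_review(tags: set[str]) -> bool:
    """True when any tag falls in the always-review set."""
    return bool(tags & ALWAYS_REVIEW)


def pick_for_review(items: list[dict], sample_rate: float, seed: Optional[int] = None) -> list[dict]:
    """Select every mandatory item, plus a random sample of the remaining ones."""
    rng = random.Random(seed)
    selected = []
    for item in items:
        if needs_human_review(set(item["tags"])) or rng.random() < sample_rate:
            selected.append(item)
    return selected


queue = [
    {"id": 1, "tags": ["social_caption"]},
    {"id": 2, "tags": ["performance_claim"]},
    {"id": 3, "tags": ["alt_text"]},
]
# With a sample rate of 0, only the mandatory item is selected.
print([item["id"] for item in pick_for_review(queue, sample_rate=0.0)])
```

Raising `sample_rate` for a channel is one concrete way to tighten review when its trust metrics start trending the wrong way.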

I’ve seen ethics become a growth advantage because it means you get more distribution: platforms ding you less, co-marketing partners trust you more, online communities let you live there for longer, and your CAC gets better over time because you’re not perpetually paying to outrun the distrust you’re generating. If you’re building repeatability in this area, outsourcing social media to AI can be a useful companion read.

The End

Responsible AI deployment in startup marketing is an ideal until it is a moat. It starts the day you implement it.

You only achieve the advantage when your defaults are to represent your product truthfully, handle customers’ and prospects’ information like gold, decide acceptable risk channel by channel, and provide a clear, timely appeals process for algorithmic decisions. This aligns with broader findings that people want disclosure: 61.3% of US consumers think media publications should always disclose when content is created by AI (see the AI disclosure expectations data).

This is how you act faster than big companies without building up negative equity in the form of distrust, ad disapproval, email deliverability issues, or community revolts.

Measure trust.

In order to scale responsibly, you need to monitor basic leading indicators to detect when AI-driven marketing is losing direction: increasing unsubscribes, increased spam reporting, increasing need for explanation, increasingly baffled sales conversations, and ads that start getting disapproved.

When any of these indicators is trending the wrong way, don’t debate philosophy; tighten the dials: decrease the automation dial for that media type, introduce an approval gate, and tie every factual claim to an open-in-two-clicks source of truth.

Being channel-aware about risk gives you an additional advantage, since channels scale error in different ways.

The most stringent protections should remain where compounding occurs the most, like paid targeting, personalization, and anything resembling customer proof.

I’ve seen small tweaks to copy generated by AI lift short-term click-through rates while concurrently ratcheting up the complaint rate or setting off the “creepiness” alarm, and that unpriced risk later manifests as degraded deliverability, longer sales cycles, and more effort spent rebuilding trust. At the same time, AI can perform strongly on outcomes: one study found AI-generated ads outperformed human-created content (59.1% vs 40.9%, p < 0.001), even accounting for a detection penalty (see the research on LLM-generated ads performance).

My final rule is really simple, and it has saved every growth stack I’ve built: if I would be embarrassed to describe an approach to a customer, I wouldn’t scale it, because the damage always outweighs the benefit.

When you follow that rule, AI is what it’s meant to be to a startup: additive growth, not a landmine.

Ethical use of AI in startup marketing is not slower marketing, it is marketing you can keep stacking without eventually having to pay for it twice.
